
Hermann's Grid and Organizational Systems: How AI Amplifies Structural Design

Artificial intelligence does not correct organizational flaws; it amplifies them. Here is what that reveals about structure, culture, leadership, and decision-making.

Why Choose The Flock?

+13.000 top-tier remote devs

Payroll & Compliance

Backlog Management

Looking at my list of pending items, I made a connection with an image I saw in a book I took to the beach this summer.

What is the Hermann Grid and why is it useful for thinking about organizations?

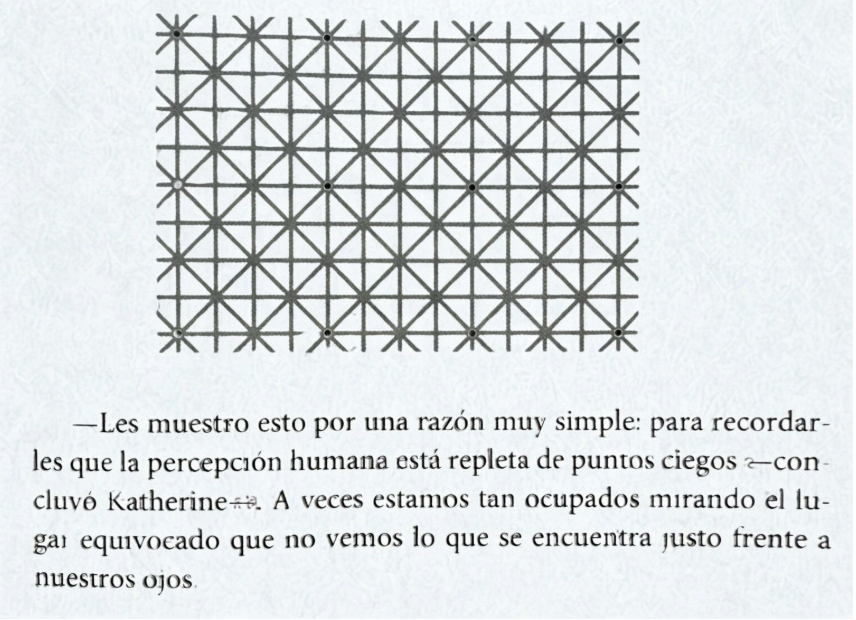

The book is The Secret of Secrets by Dan Brown. It features the "Hermann Grid," an optical illusion in which ghostly gray spots appear at the intersections of a grid.

What does this have to do with my list of pending items?

In the areas of HR, talent, culture, and communication, we believe we are describing reality when we say or hear "lack of alignment," "that team doesn't deliver," "legal is blocking," "there's no culture," or "there's a communication problem." These are all interpretations of isolated data. They do not take into account the system formed by the organizational structure and its culture, the information we have available, and the real incentives.

Today more than ever, that system is affected by macro uncertainty, institutional crises, digital transformation, artificial intelligence, and the need for new skills. When the environment becomes more uncertain, the system organizes perception in order to survive, and ghost spots appear that lead us to easy culprits, simplified narratives, and biased diagnoses. We forget that the organization is a system and that, limited by our perception, we treat something as important when it is not what the system needs. "We need to communicate better!" "We need a policy!" Sound familiar?

Properly aligning the intangibles (culture, talent, innovation, leadership, sustainability, purpose, etc.) requires a well-designed "grid" without broken intersections that hinder coordination. A typical example is the belief that, in a climate of distrust or potential crisis, reputation can be maintained with storytelling, so the analysis stops at the communication area. Communication is everything, and yet it does not operate alone.

And how could we not talk about AI? It is a cross-cutting factor that runs through the entire grid like blood through veins, amplifying the organizational design that already exists. If the system is confusing, AI scales the confusion. If the system sustains an aligned conversation, AI scales that alignment.

How do we avoid being biased by one of the points we see on the grid?

There are surely many possible answers; however, I suggest three:

- Data is not its interpretation. A delay is not necessarily inefficiency; an error is not necessarily a lack of capability. Separating data from interpretation provides clarity.

- Simplify bureaucracies and reduce the intersections between parts of the structure. Establish clear governance on who decides what, what the criteria are, and what the decision timelines are.

- Track fewer metrics, only the ones that matter. Have leadership review and discuss those metrics by looking at the system, not just the symptoms.

In the end, for me, this is the central idea: organizations are systems that perceive, and when the system is poorly designed, it not only functions poorly but also sees poorly.
